
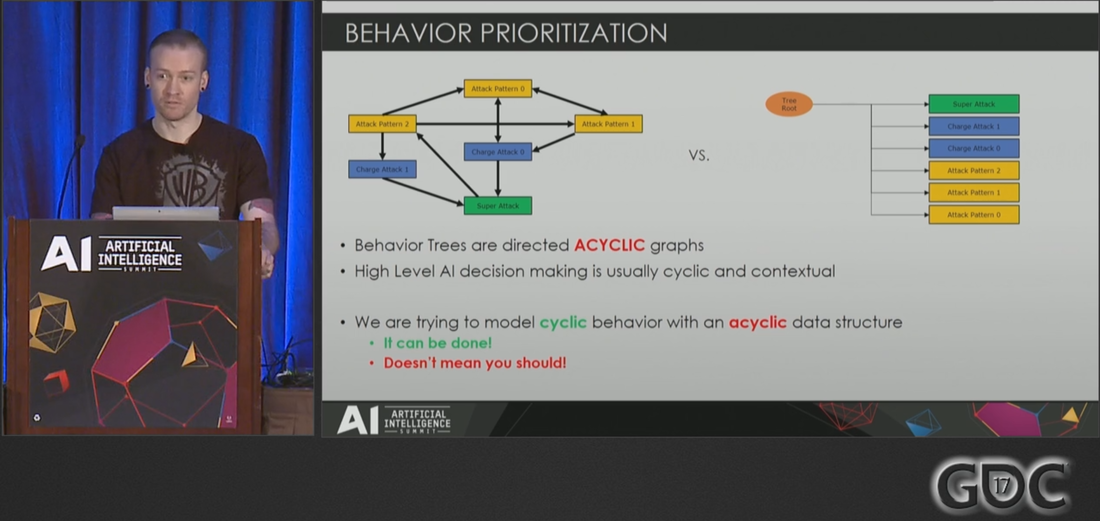

In the first post related to flexibility in behaviour trees, I argued that behaviour trees and state machines can accomplish similar things, and that each implementation method has its own benefits. I recently watched a GDC 2017 talk by Bobby Anguelov that redefined my perspective on these issues. Much of what I proposed in previous camera experiments models cyclic behaviour on cameras with behaviour trees, and I did not know why that should not be done until watching this talk. In fact, he suggests state machines are clearly better than behaviour trees at high level decision making. He provides examples where the acyclic data structure of behaviour trees complicates implementation and makes it inefficient. However, Anguelov does not think behaviour trees are all bad.

The main takeaway from his talk is to use behaviour trees only after high level decisions have already been made, where their ability to execute tasks in parallel can supplement the cyclic logic of state machines. The rest of this post describes how his approach would integrate with previous camera implementations I have discussed, and uses this new knowledge to guide future experiments with behaviour trees on cameras.
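To make that division of labour concrete, here is a minimal, engine-agnostic sketch in Python rather than UE4 blueprints. It is not Anguelov's implementation or anything from my camera experiments: the class names, the `follow` and `aim` states, the event names, and the task names are all hypothetical. A small state machine owns the cyclic high level decision, and each state hands off to a behaviour tree whose parallel node ticks several camera tasks in the same frame.

```python
from enum import Enum, auto


class Status(Enum):
    SUCCESS = auto()
    FAILURE = auto()
    RUNNING = auto()


class Task:
    """Leaf node: wraps a function that does one piece of camera work."""

    def __init__(self, name, fn):
        self.name = name
        self.fn = fn

    def tick(self, ctx):
        return self.fn(ctx)


class Parallel:
    """Ticks every child each frame.

    Fails if any child fails, succeeds once all children succeed,
    and otherwise reports that work is still running.
    """

    def __init__(self, children):
        self.children = children

    def tick(self, ctx):
        results = [child.tick(ctx) for child in self.children]
        if Status.FAILURE in results:
            return Status.FAILURE
        if all(result is Status.SUCCESS for result in results):
            return Status.SUCCESS
        return Status.RUNNING


class CameraStateMachine:
    """High level decisions live here; transitions are free to form cycles."""

    def __init__(self, trees, transitions, initial):
        self.trees = trees              # {state: behaviour tree root}
        self.transitions = transitions  # {(state, event): next state}
        self.state = initial

    def handle(self, event):
        # Unknown events leave the current state unchanged.
        self.state = self.transitions.get((self.state, event), self.state)

    def tick(self, ctx):
        # The tree only runs after the state machine has picked a mode.
        return self.trees[self.state].tick(ctx)


def log_task(name):
    """Hypothetical stand-in for real camera work: records that it ran."""

    def run(ctx):
        ctx["log"].append(name)
        return Status.SUCCESS

    return Task(name, run)


def build_camera():
    trees = {
        "follow": Parallel([log_task("follow_player"), log_task("avoid_collision")]),
        "aim": Parallel([log_task("frame_target"), log_task("tighten_fov")]),
    }
    transitions = {
        ("follow", "aim_pressed"): "aim",
        ("aim", "aim_released"): "follow",  # cycle back: easy in a state machine
    }
    return CameraStateMachine(trees, transitions, initial="follow")
```

In use, the game would call `handle()` when input or gameplay events arrive and `tick()` once per frame. The cycle between `follow` and `aim` stays in the transition table, while the trees stay small and acyclic.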


One 1998 Nintendo title made a permanent mark on cameras long before the third person action genre really took off - and it is not The Legend of Zelda: Ocarina of Time. While games like GTA III (2001) and Demon's Souls (2009) have targeting systems similar to Ocarina of Time's, these features were also present in GTA III's precursor Body Harvest (1998), so neither camera can claim as much influence. I am talking, of course, about Super Mario 64, whose success allowed other influential third person games like Resident Evil 4 (2005) and Gears of War (2006) to create their own revolutions. Remember that the N64 controller only had one control stick!

The follow camera was a vast improvement over its contemporaries, which were often limited to static cameras, as seen in Resident Evil (1996), and rail cameras, as in Star Fox (1993). The revolutionary feature in this game is a camera that slowly rotates behind the player to adjust to their movements - notably causing avatars to run in circles when running towards the camera. If you have ever seen this in a game, chances are that camera is a "follow camera" that was inspired by Super Mario 64.

There are two camera modes in Super Mario 64: Mario Mode and Lakitu Mode. My project, Super Maria 64, will focus on implementation of Mario Mode. See reference of a player completing the game in Mario Mode here. Lakitu Mode is preferred by many players and has aged better, but it is basically another layer of features on top of the features in Mario Mode... so we will avoid that complexity.

Goal: Go from a blank UE4 level to a prototype quality camera

This post documents the first step towards the goal of the Super Maria 64 project, and provides readers with a quick mock-up of the camera lag seen in our reference. I will show video of the final result taken with Sequencer, as well as the blueprints.
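As a rough illustration of a camera that "slowly rotates behind the player", here is a small Python sketch of yaw lag. It is not the blueprint from this project, nor how Super Mario 64 itself works: the function names, the `lag_speed` parameter, and the choice of frame-rate independent exponential smoothing are all my own assumptions for the sake of the example.

```python
import math


def shortest_angle_delta(current_deg, target_deg):
    """Signed smallest rotation from current to target, in [-180, 180)."""
    return (target_deg - current_deg + 180.0) % 360.0 - 180.0


def lag_camera_yaw(camera_yaw, player_yaw, lag_speed, dt):
    """Move the camera yaw a fraction of the way towards the player's yaw.

    lag_speed is a hypothetical tuning value: higher means a snappier camera.
    The exponential term keeps the feel similar at different frame rates.
    """
    delta = shortest_angle_delta(camera_yaw, player_yaw)
    alpha = 1.0 - math.exp(-lag_speed * dt)
    return (camera_yaw + delta * alpha) % 360.0


def simulate(camera_yaw, player_yaw, lag_speed, dt, frames):
    """Run the lag for a number of frames and return the final camera yaw."""
    for _ in range(frames):
        camera_yaw = lag_camera_yaw(camera_yaw, player_yaw, lag_speed, dt)
    return camera_yaw
```

Calling `lag_camera_yaw` once per frame with the player's current facing makes the camera trail behind quick turns and settle once the player runs straight. Taking the shortest signed rotation matters because a camera at 350 degrees chasing a player at 10 degrees should turn 20 degrees, not 340.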

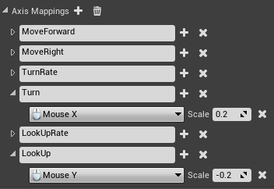

Reference: Mark Haigh-Hutchinson. 2009. "Real-Time Cameras: A Guide for Game Designers and Developers." Elsevier.

Scaling down the responsiveness in Editor Preferences.

This post is the conclusion to a series intended to share knowledge I found in the resource John Nesky called the "only textbook" in the field of cameras for game design. The final nuggets of wisdom discuss fundamental aspects of any camera. Conveniently, I am beginning to build a new third person camera based on learnings from the Camera Experiments series, and these fundamentals are an excellent place to start - especially as I have neglected sharing them, or even thinking about them, for so long! To keep this discussion quick and to the point, I have chosen to focus on mouse control schemes only. This is a quick primer on how to start your own camera. Here are the final camera design guidelines from Haigh-Hutchinson's textbook; see the previous articles in this series for more of this wisdom.

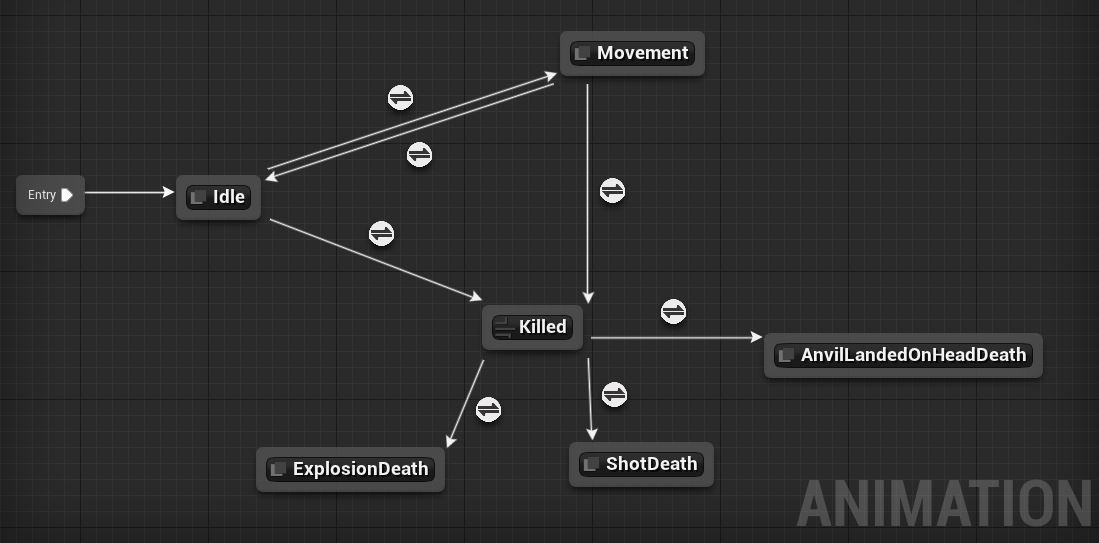

Animators who use Unreal Editor 4 are already familiar with state machines. Why can't those be used for cameras too? State machines' other benefits include:

When it comes to gameplay cameras, the quick answer to why you would choose behaviour trees over state machines is flexibility. Flexibility, in turn, allows the underlying systems to be reused across multiple projects - and therefore supports more sustainable development practices.

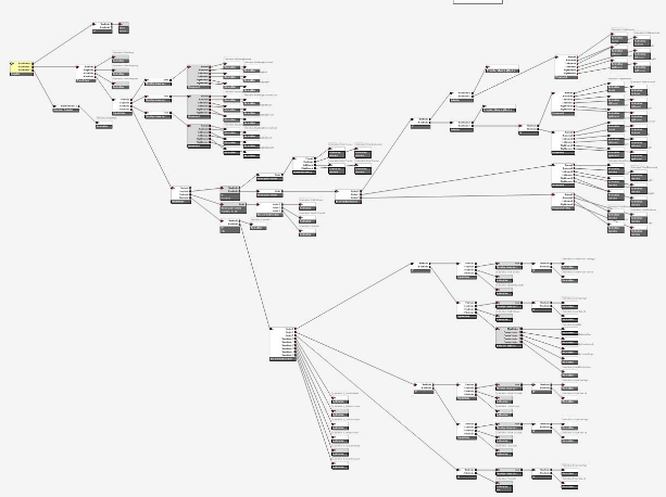

There are practical limitations to using the animation state machines for any camera system that allows real time control by the player. While it would be possible to rip apart the state machine graphical display elements and make their code work with camera behaviours, vanilla Unreal Editor 4 state machines are only compatible with skeletal meshes. This is a common limitation of state machine visual scripting modules because they are often streamlined for animation. As a result, we see similar limitations when using Unity's Mecanim: it is built for blending and transitions between animations that have exact definitions, whereas cameras require adaptive behaviours to address their "fuzzy" constraints.

Let's put the implementation of state machine modules aside and look for proof that behaviour trees bestow more flexibility for camera behaviours than even an ideal state machine. The purpose of this experiment is to use nonfunctional examples to compare Unreal's implementation of Behavior Trees and State Machines for similar camera behaviours. The "Sense" and "Act" aspects of behaviours were not created. Our starting point for this experiment is any project with the Animation Starter Pack, which can be added to your project for free from Epic Games in the Marketplace.

James Dodge

Level Designer

October 2021
